The dramatic growth of cameras and sensors has outpaced our ability to observe and respond to them. We need a breakthrough in our ability to observe, analyze and learn from our physical environments. Hypergiant Sensory Sciences is on a mission to solve this complex problem. How do you teach artificial intelligence to identify, track and predict objects in video footage?

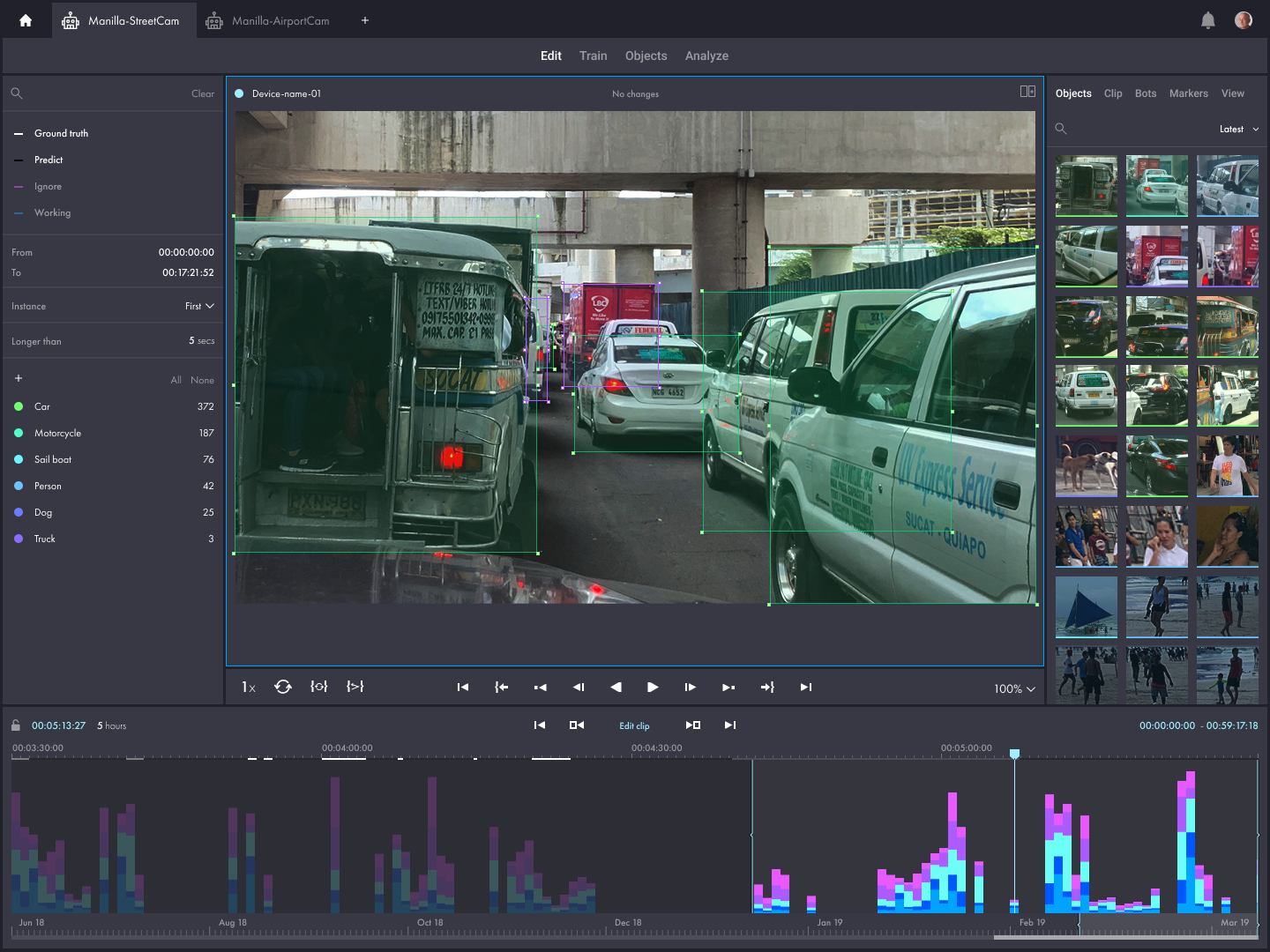

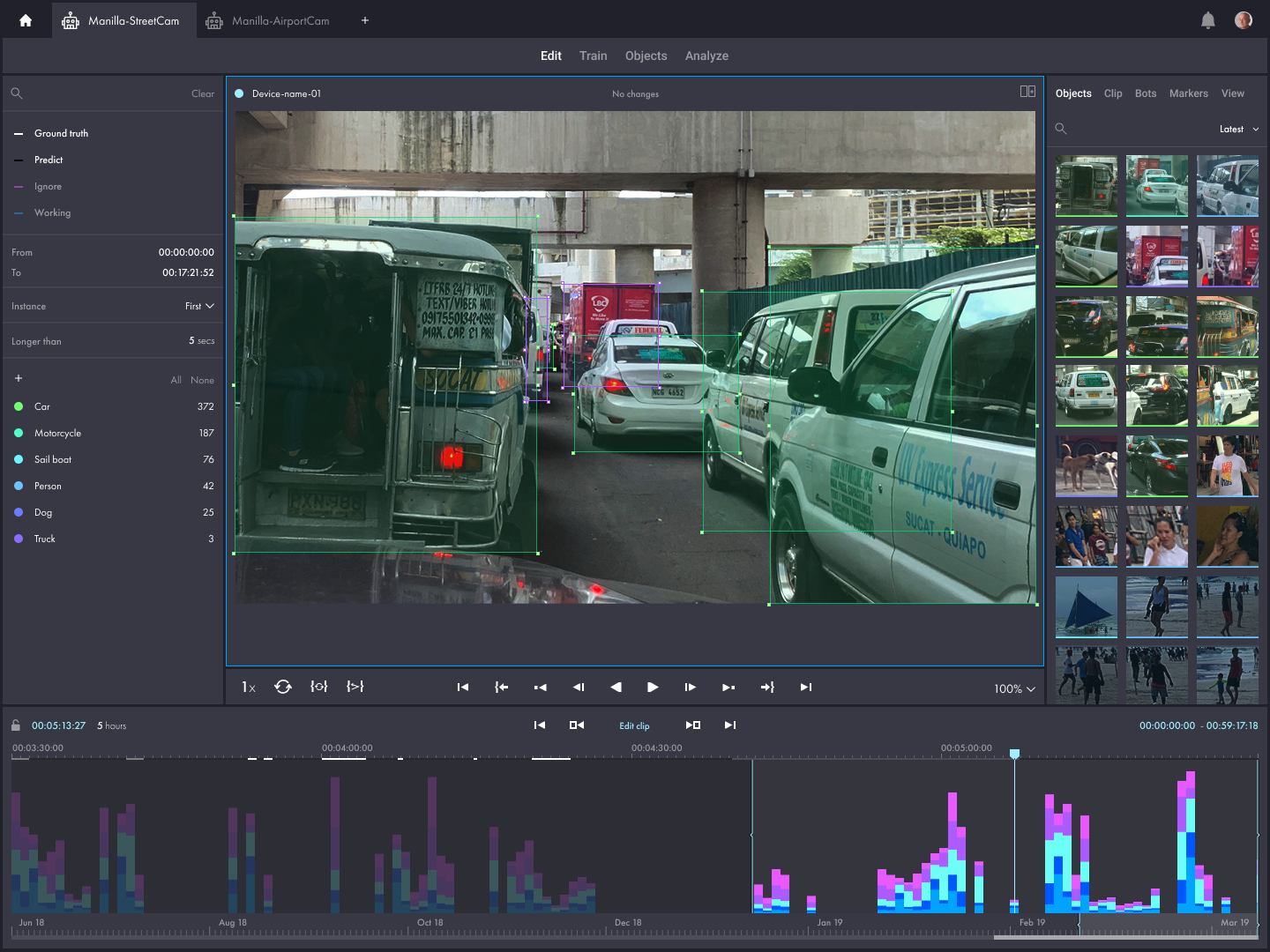

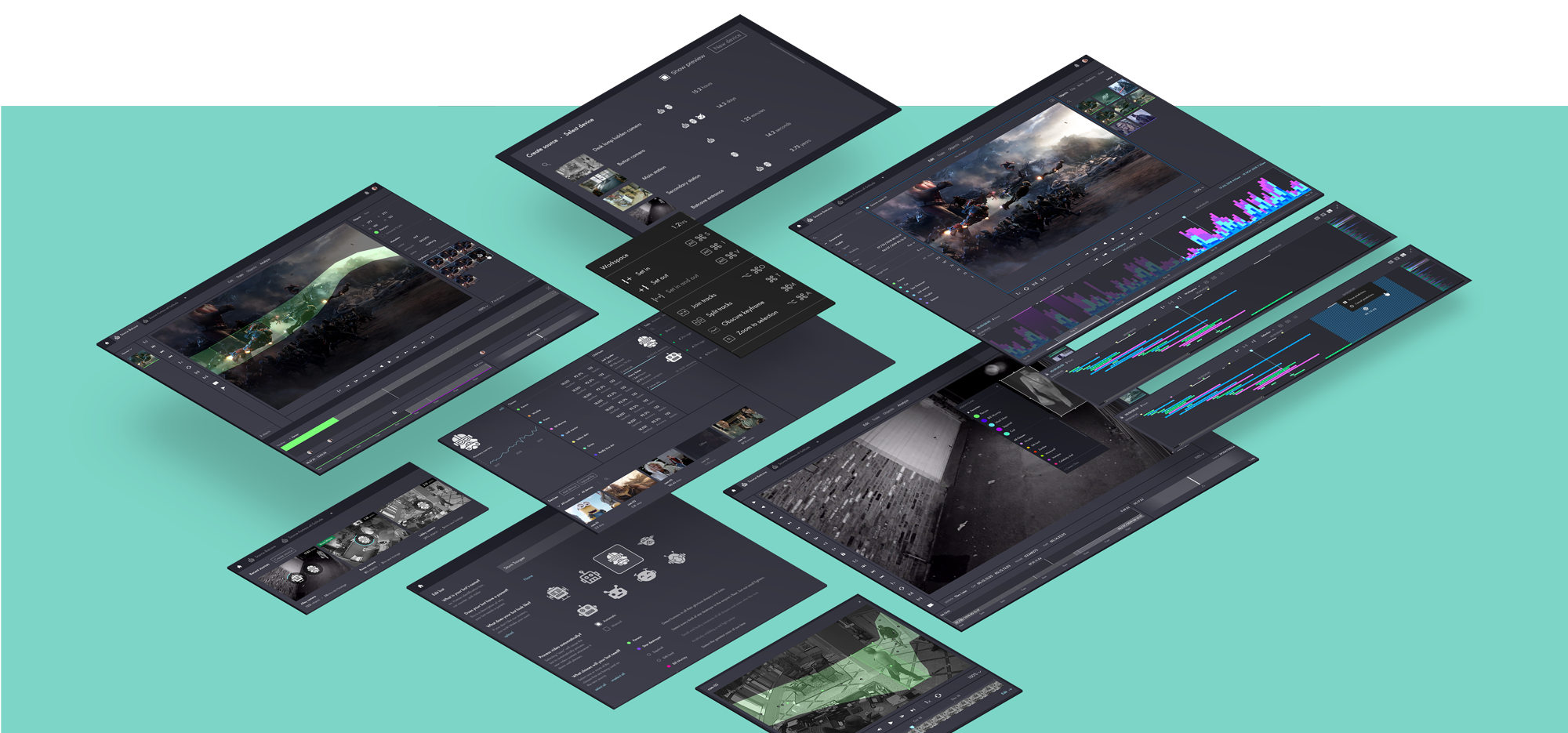

Vega leveraged its experience with machine learning, complex data visualization, and video editing UX to design a platform that is as beautiful as it is powerful. This platform enables users to engage with artificially intelligent bots to quickly identify and track objects in video footage, as well as create custom alerts based on anomalous events.

Hypergiant Sensory Sciences is dedicated to building "sensory perception at scale" powered by machines that can see, sense and alert us when important things happen in our environments. But even bots require training. Vega was tasked with this challenge. How do you tackle the complex, large-scale problem of training bots to understand video footage without overwhelming non-technical users?

We love when we get to join a client at the very beginning of a product’s life. Even more exciting is when we are given the opportunity to partner with a client who is building something entirely new. Hypergiant’s product is truly novel, addressing an entirely new set of challenges and opportunities. In the absence of exemplar tools in the market, we are afforded the challenging and exciting opportunity to invent.

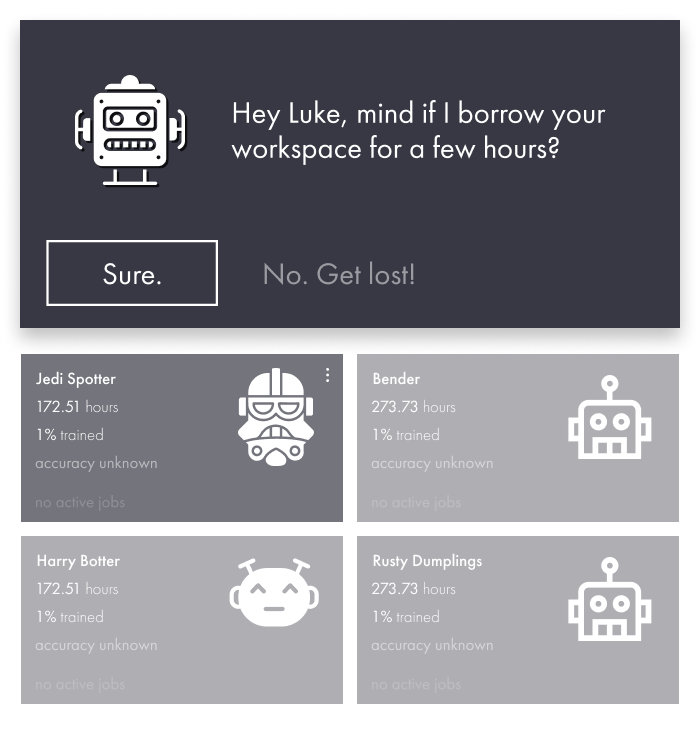

Sensory Sciences is all about augmented intelligence: the idea that AI and humans together can accomplish something that neither could on their own. Thoughtful UX is key to facilitating this. It has to simplify complex concepts like training objectives and “ground truth,” and communicate when the human has completed their work and the machine intelligence is taking over. Sometimes the two need to work side by side. To address these challenges, we brought in the concept of bots: artificially intelligent agents that function inside the app just like other users, with avatars and personalities all their own.

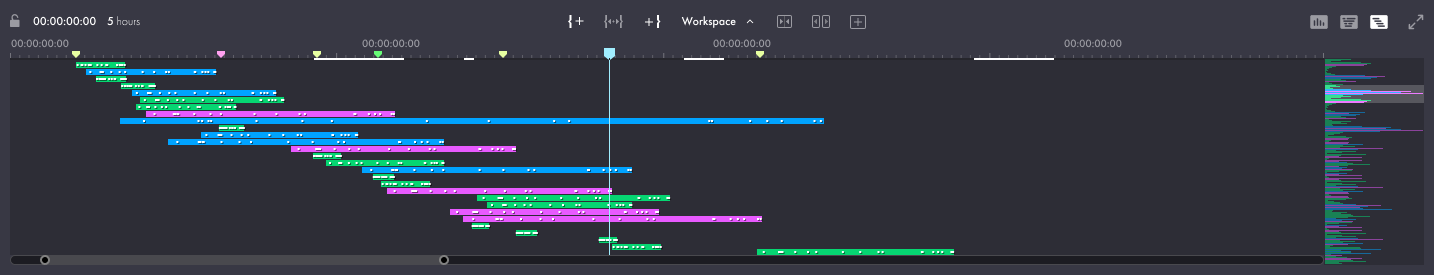

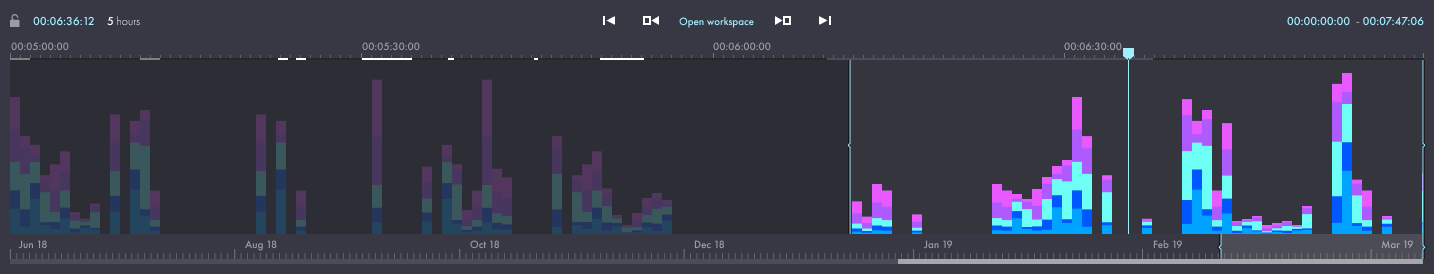

The timeline component is the user's primary form of navigation for the training and editing workflows. There were three areas of the timeline that proved particularly challenging from a UX perspective.

A video source such as a security camera can have years' worth of recorded video stored in the system. This creates significant user experience problems when attempting to scrub and edit information across such a large timeline. We solved these issues using a combination of timeline elements within a single timeline component. The solution works well because each timeline action is very different and does not require the same kind of overlap to provide context.

Let's say there is a security camera positioned in the center of a mall that averages 5,000 people per day and the system is tracking people. That's roughly 1.8 million tracked objects (5,000 × 365) for one year of data. Navigating this many objects can be challenging. In a timeline where every object is given its own dedicated slice of vertical real estate, this creates a usability nightmare that scrolling can't solve. We addressed this problem by enforcing limits on the amount of video a user is able to edit at a given time. This also provided an opportunity to reduce the amount of functionality exposed to the user while in edit mode, making for a much simpler experience.
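To make the scale concrete, here is a minimal Python sketch of the arithmetic in the mall example, along with one way an edit-window limit could be enforced. The one-hour cap and the names `MAX_EDIT_WINDOW` and `clamp_edit_window` are illustrative assumptions, not the limits or code used in the actual product.

```python
from datetime import datetime, timedelta
from typing import Tuple

PEOPLE_PER_DAY = 5_000   # figure from the mall example
DAYS_PER_YEAR = 365

# Hypothetical cap: the real limit used in the product is not stated.
MAX_EDIT_WINDOW = timedelta(hours=1)


def tracked_objects_per_year(per_day: int = PEOPLE_PER_DAY) -> int:
    """Number of tracked objects one camera accumulates in a year."""
    return per_day * DAYS_PER_YEAR


def clamp_edit_window(
    start: datetime,
    end: datetime,
    max_window: timedelta = MAX_EDIT_WINDOW,
) -> Tuple[datetime, datetime]:
    """Shorten a requested edit range so it never exceeds max_window."""
    if end <= start:
        raise ValueError("end must be after start")
    return start, min(end, start + max_window)
```

With a cap like this, the editor only ever has to lay out the objects that appear inside a short window, rather than a year's worth at once.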

Customers will typically have multiple users using the system concurrently, and bots are typically set to automatically consume and process video. The best way to prevent collisions between users and bots is to enforce the concept of a workspace and create workflows that prevent users from making edits without first defining the workspace. Once the user has finished making changes, they will be prompted to save and release. Other users and bots will also have the ability to request access to portions of video that are in use.
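The workspace idea can be sketched as a simple registry of claimed time ranges. This is a hedged illustration in Python, assuming a workspace is a half-open range of seconds on a single video source. The class and method names (`Workspace`, `WorkspaceRegistry`, `claim`, `release`) are hypothetical, and the access-request flow is left out.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class Workspace:
    owner: str      # a human user or a bot; both are treated alike
    start_s: float  # inclusive start, in seconds of video
    end_s: float    # exclusive end, in seconds of video

    def overlaps(self, start_s: float, end_s: float) -> bool:
        # Half-open ranges overlap when each starts before the other ends.
        return self.start_s < end_s and start_s < self.end_s


@dataclass
class WorkspaceRegistry:
    active: List[Workspace] = field(default_factory=list)

    def claim(self, owner: str, start_s: float, end_s: float) -> Optional[Workspace]:
        """Reserve a range for editing; return None if any part is in use."""
        if end_s <= start_s:
            raise ValueError("end_s must be greater than start_s")
        if any(w.overlaps(start_s, end_s) for w in self.active):
            return None
        workspace = Workspace(owner, start_s, end_s)
        self.active.append(workspace)
        return workspace

    def release(self, workspace: Workspace) -> None:
        """Called after save-and-release so others can claim the range."""
        self.active.remove(workspace)
```

Because edits are only allowed inside a claimed workspace, two editors (human or bot) can never write to the same stretch of video at the same time; a rejected claim is the natural point to offer the "request access" option.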

Our team works collaboratively with founders, engineers and product development teams to design great products. Want to build something great together?

Drop us a line